Learning of View-based Object Representations

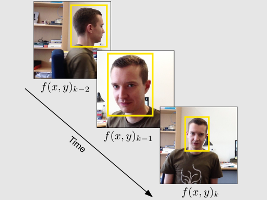

Introduction and Overview

This work focuses on the automatic learning of view-based object representations, initialized by a single example of the object of interest (a rectangular template or bounding box). The example is provided either by a user (semi-supervised) or by further algorithms (unsupervised); see Fig. 1 for an overview of the system structure. Based on an integrated scheme of detection and learning, the position of the object is continuously determined while the view-based object representation is updated. Traditional object recognition systems require a large amount of training in advance and are generally optimized for a specific application and object class. In contrast, the object recognition system developed in this work is not limited to a specific object class, and the extensive advance training phase is replaced by an online learning algorithm. This allows for interaction with a broad range of further algorithms, for example artificial visual attention algorithms that initialize the integrated scheme of detection and learning, or the semantic annotation of the learned representation in order to perform higher-order tasks. The object representations can further be exploited for tasks such as long-term tracking, human-computer interaction, navigation, and scene interpretation.
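To illustrate the idea of an integrated detection and learning loop, the following is a minimal sketch only, not the system developed in this work. It assumes grayscale frames given as NumPy arrays and uses normalized cross-correlation on raw pixel templates as a stand-in for the feature-based matching described on this page. The function names (`ncc`, `detect`, `track_and_learn`) and the thresholds `accept` and `novel` are hypothetical choices made for the example.

```python
import numpy as np

def ncc(a, b):
    """Normalized cross-correlation between two equally sized patches."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0

def detect(frame, views, stride=2):
    """Score every window (on a grid of step `stride`) against all stored views."""
    h, w = views[0].shape
    best_score, best_pos = -1.0, (0, 0)
    for y in range(0, frame.shape[0] - h + 1, stride):
        for x in range(0, frame.shape[1] - w + 1, stride):
            patch = frame[y:y + h, x:x + w]
            score = max(ncc(patch, v) for v in views)
            if score > best_score:
                best_score, best_pos = score, (y, x)
    return best_pos, best_score

def track_and_learn(frames, init_box, accept=0.6, novel=0.9):
    """Detect the object in each frame and grow the set of stored views online.

    init_box is (y, x, height, width) of the single initial example.
    accept and novel are assumed thresholds: a detection is added as a new
    view only if it is confident (>= accept) yet not already well covered
    by the stored views (< novel).
    """
    y, x, h, w = init_box
    views = [np.asarray(frames[0], dtype=float)[y:y + h, x:x + w].copy()]
    positions = [(y, x)]
    for frame in frames[1:]:
        frame = np.asarray(frame, dtype=float)
        (y, x), score = detect(frame, views)
        positions.append((y, x))
        if accept <= score < novel:
            views.append(frame[y:y + h, x:x + w].copy())
    return positions, views
```

In this sketch the stored views play the role of the view-based representation: detection uses all views collected so far, and each sufficiently confident but novel detection extends the set. An exhaustive window scan like this is slow, which is one reason a fast rejection stage is attractive in practice.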

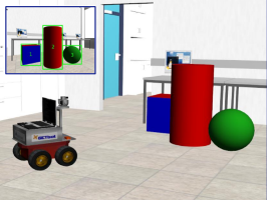

Biological Background and Feature Selection

The object learning system developed in this work is biologically motivated. Because the human visual system is capable of learning and recognizing arbitrary objects and object classes, such mechanisms are systematically exploited in this work. The learning and detection scheme is based on local appearance and shape features. Both types of features are needed to deal with a broad range of objects (see Fig. 2). By using local instead of global features, partial occlusions and certain object transformations (for example, those of non-rigid objects) can be handled.

Further Objectives

The object learning system developed in this work consists of several further components, each associated with its own research questions, for example:

Structure of local features: The relation of local features to each other is an important characteristic to describe when developing robust object recognition systems. Therefore, this work investigates by which approach and at which depth the relation between local features should be described. The possibilities range from describing no relations at all (for example, bag-of-features) to graph-based approaches that describe the relation of each feature to all other features.

Outlier rejection: Object detection typically involves examining a set of several thousand bounding boxes per image. Many of these candidate object positions can be rejected early by analyzing global features that are fast to calculate (variance, histograms, etc.), which accelerates the whole detection process. This work investigates which features and which existing algorithms are suitable for multipurpose object recognition systems.

Semantic annotation: A challenging research question addressed in this work is the automatic annotation of the view-based object representations with semantic labels. Given one or several views of an object, the idea is to use a search engine to find visually similar images. The websites containing those similar images are then analyzed by text-mining methods in order to extract semantic labels.
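To make the outlier rejection step described above more concrete, here is a minimal sketch of one possible fast global feature, the gray-value variance of a candidate box, computed in constant time per box from integral images. This is an illustrative assumption, not necessarily the feature or algorithm selected in this work. The function names and the `min_ratio` threshold are hypothetical.

```python
import numpy as np

def integral_image(img):
    """Summed-area table with a zero row and column prepended."""
    ii = np.zeros((img.shape[0] + 1, img.shape[1] + 1), dtype=np.float64)
    ii[1:, 1:] = img.cumsum(axis=0).cumsum(axis=1)
    return ii

def box_sum(ii, y, x, h, w):
    """Sum of the pixels in the box with top-left (y, x) and size (h, w)."""
    return ii[y + h, x + w] - ii[y, x + w] - ii[y + h, x] + ii[y, x]

def variance_filter(img, boxes, template_var, min_ratio=0.5):
    """Keep only boxes whose variance is at least min_ratio * template_var.

    boxes is a list of (y, x, height, width) tuples. min_ratio is an
    assumed threshold chosen for this example.
    """
    img = np.asarray(img, dtype=np.float64)
    ii = integral_image(img)          # sums of pixel values
    ii2 = integral_image(img * img)   # sums of squared pixel values
    kept = []
    for (y, x, h, w) in boxes:
        n = h * w
        mean = box_sum(ii, y, x, h, w) / n
        var = box_sum(ii2, y, x, h, w) / n - mean * mean  # E[p^2] - E[p]^2
        if var >= min_ratio * template_var:
            kept.append((y, x, h, w))
    return kept
```

Because each box costs only a handful of table lookups, a filter of this kind can discard flat background regions before any more expensive feature matching is attempted. Here `template_var` would be the variance of the initial example patch.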